AI in SOC 2 Audits: Efficiency, Accuracy, and the Road Ahead

November 30, 2025 | 8 min read

Introduction: The Rise of AI in SOC 2 Audits

Amid the excitement and uncertainty surrounding AI and its short- and long-term impacts on business, the technology has rapidly become a cornerstone of operations. By mid-2024, 78% of organizations were using AI in at least one function, up from 55% the year before, with 71% regularly applying generative AI. (Source: McKinsey – State of AI 2024)

Gartner projects that generative AI could boost productivity by nearly 25% and cut costs by 16% within 18 months. With such promising returns, AI is increasingly making its way into GRC, audit, attestation, and certification activities. A 2025 global survey by AuditBoard and Panterra Research found that 72% of mature organizations (those with advanced GRC frameworks) are embedding AI across risk and compliance functions.

The SOC 2 attestation, developed by the AICPA, is a widely accepted report used to communicate whether a service organization’s internal controls are suitably designed as of a point in time (Type 1) and, in addition, operated effectively over a period (Type 2) according to the Trust Services Criteria: Security, Availability, Confidentiality, Processing Integrity, and Privacy. Organizations pursue SOC 2 Type 2 attestation primarily to assure customers and business partners that their internal controls align with industry best practices.

Because SOC 2 attestation is technology-agnostic, it is routinely applied to cloud-hosted and AI-enabled systems. Practitioners perform procedures such as inquiry, observation, inspection, and reperformance, often supported by GRC platforms. Today, many firms are also leveraging AI and advanced analytics to streamline risk assessment, test selections, and evidence organization, while maintaining professional judgment and audit quality.

The use of AI by practitioners within SOC 2 attestation engagements is reshaping every phase of the work, from assessing risks to evaluating evidence, without altering the underlying Trust Services Criteria or attestation standards. As AI models mature, auditors are applying AI to accelerate population analysis, sampling, control mapping, and evidence synthesis that once required extensive manual effort. Crucially, professional judgment and the requirement for sufficient appropriate evidence remain unchanged: firms must govern AI use, assess the reliability and accuracy of AI-generated outputs, and corroborate results through established procedures such as inquiry, observation, inspection, and reperformance.

Business Case for AI in SOC 2 Compliance

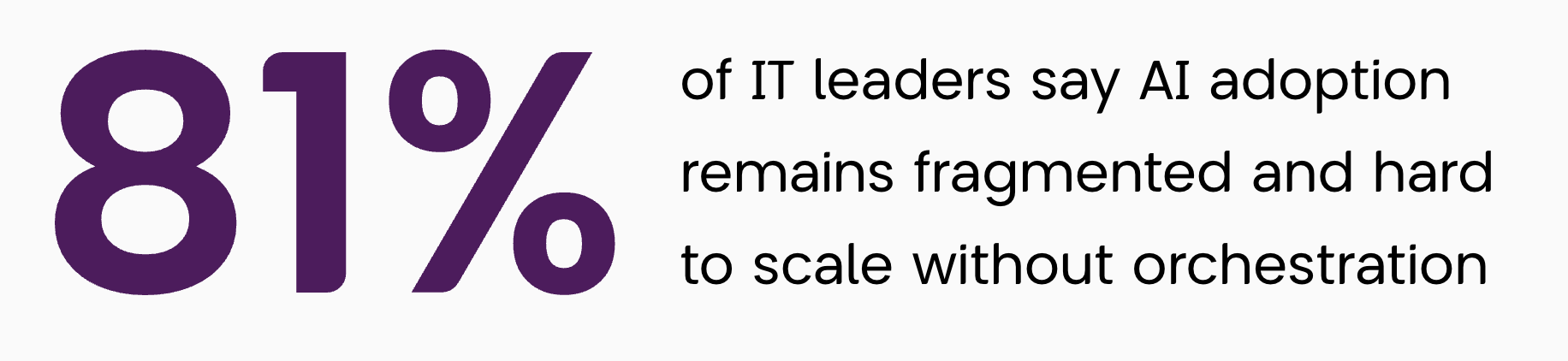

Organizations are increasingly turning to AI because today’s SOC 2 attestations are difficult to scale with speed, accuracy, and consistency.

AI adoption in SOC 2 reflects a broader shift toward continuous assurance, data-driven risk management, and rising client expectations for real-time trust reporting.

Source: ISACA Research, 2024

The first constraint is talent economics. Onboarding, developing, and retaining experienced professionals is costly, and their time is scarce. AI can capture institutional knowledge, helping new staff ramp up faster while enabling experienced team members to focus on higher-judgment areas, without displacing professional judgment.

The second constraint is a fast-changing business environment. Cloud releases, architectural shifts, policy updates, and new data flows evolve rapidly. AI supports ongoing change surveillance and impact mapping so auditors and management can stay current on which controls, Trust Services Criteria, and test procedures are affected. This coordination works best when audit, IT, and operations teams co-own data pipelines and control processes, turning AI into a shared assurance platform rather than a standalone tool.

Data dynamics create a third constraint. Distributed systems produce vast, diverse evidence sets, and manual analysis can strain both accuracy and coverage. AI can assist with population-completeness checks, accelerate artifact classification and correlation, and triage anomalies so reviewers focus where risk is greatest. These same capabilities lay the foundation for continuous readiness, where evidence gathering and control validation become ongoing, data-driven processes rather than periodic reviews.
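As a rough illustration of a population-completeness check followed by a reproducible sample selection, consider the minimal Python sketch below. It is not drawn from any particular GRC platform: the function names, the change-ticket IDs, and the seed are all hypothetical, and a real engagement would pull the population from the system of record rather than from hard-coded lists.

```python
import random

def completeness_check(source_ids, evidence_ids):
    """Compare the system-of-record population with the evidence received."""
    source, evidence = set(source_ids), set(evidence_ids)
    return {
        # Items in the system of record that the evidence set does not cover
        "missing_from_evidence": sorted(source - evidence),
        # Items in the evidence set that the system of record does not know
        "unexpected_in_evidence": sorted(evidence - source),
    }

def select_sample(population_ids, sample_size, seed):
    """Draw a seeded random sample so a reviewer can re-derive the selection."""
    ordered = sorted(set(population_ids))  # fixed order keeps the draw stable
    rng = random.Random(seed)
    return sorted(rng.sample(ordered, min(sample_size, len(ordered))))

# Hypothetical change-ticket IDs, for illustration only
tickets_in_system = ["CHG-101", "CHG-102", "CHG-103", "CHG-104", "CHG-105"]
tickets_in_evidence = ["CHG-101", "CHG-102", "CHG-104", "CHG-999"]

gaps = completeness_check(tickets_in_system, tickets_in_evidence)
print(gaps)

sample = select_sample(tickets_in_system, sample_size=2, seed=2025)
print(sample)
```

Recording the seed alongside the sorted population lets a second reviewer reproduce the same selection, which keeps the sampling step inspectable rather than opaque. Whether the gaps matter, and how large the sample should be, remain questions of professional judgment.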

Time pressure forms the fourth. When months separate fieldwork from report issuance, business processes and control environments may shift. AI can compress cycle time by streamlining PBC intake, normalizing evidence, and drafting consistent workpapers — helping teams deliver timely, review-ready documentation even after the examination period ends.

Finally, risk sensing for emerging technologies is becoming essential. As organizations introduce AI/ML components, new integrations, or novel data uses, AI can help detect changes early, surface related risks (access, governance, privacy), and suggest scoping questions and test procedures for control validation.

Taken together, these constraints make a business case for AI: it enhances evidence collection and evaluation, improves consistency and speed, and supports continuous readiness while the Trust Services Criteria, attestation standards, and requirements for sufficient appropriate evidence remain unchanged. For clients, this means faster insights, richer analysis, and greater transparency, strengthening trust in both the attestation process and the resulting report.

To realize these benefits, firms must establish clear governance: assigning accountability for AI outputs, validating models, and embedding oversight within existing quality control systems.

Insights on AI Usage in GRC and Audit

Artificial Intelligence is rapidly reshaping the landscape of Governance, Risk, and Compliance (GRC). Organizations are increasingly using AI to enhance efficiency, strengthen risk management, and maintain continuous compliance. Leading research and advisory firms note that, although adoption is still maturing, AI is already delivering measurable results — from accelerating audit readiness and reducing manual effort to elevating the overall quality of compliance oversight. The insights below highlight adoption patterns, leading use cases, and measurable outcomes most relevant to SOC 2 focused teams.

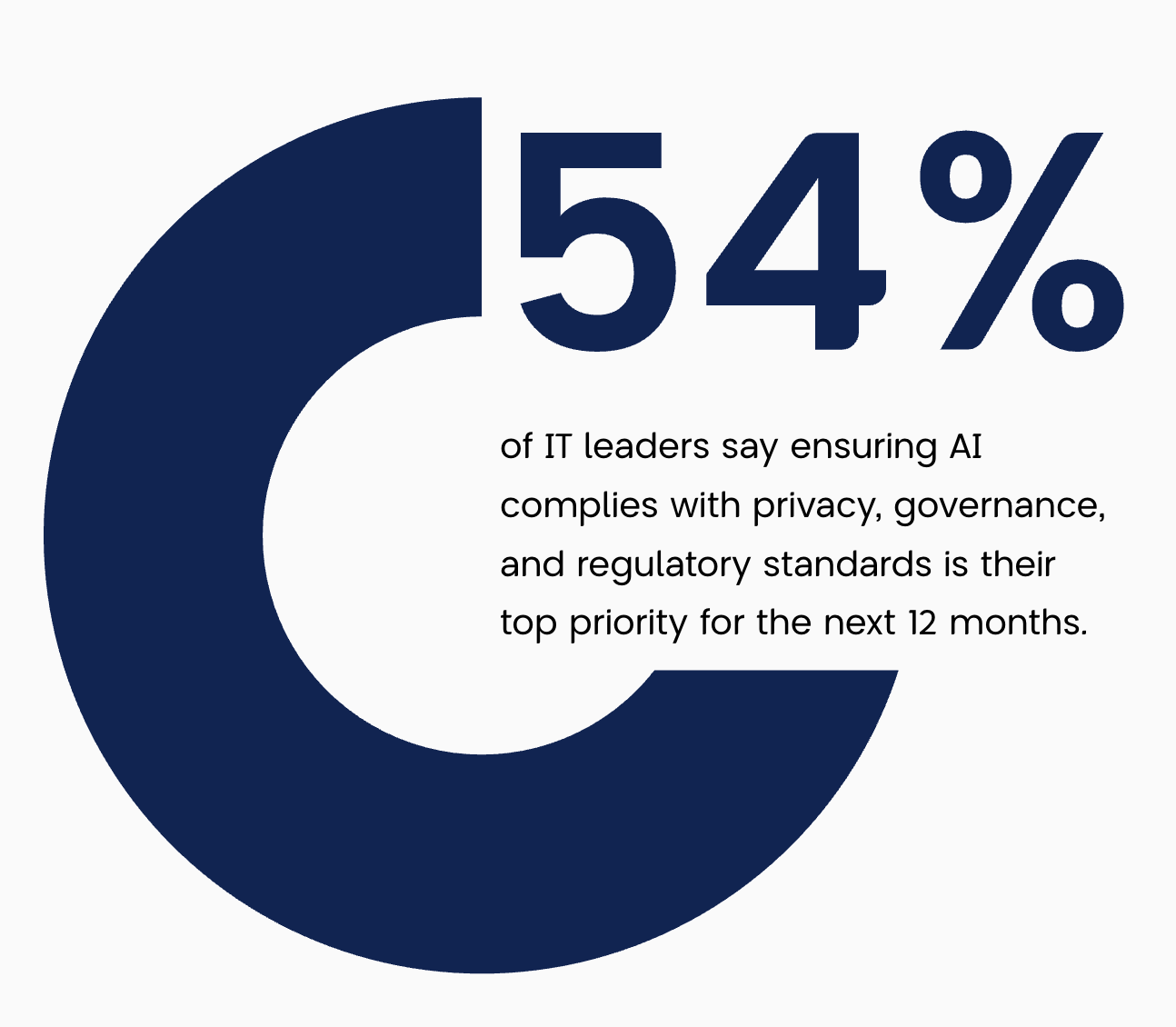

Most GRC teams are leaning in, either actively exploring AI or mapping out adoption plans. A smaller cohort has fully integrated AI to date, while only a modest share remains on the sidelines.

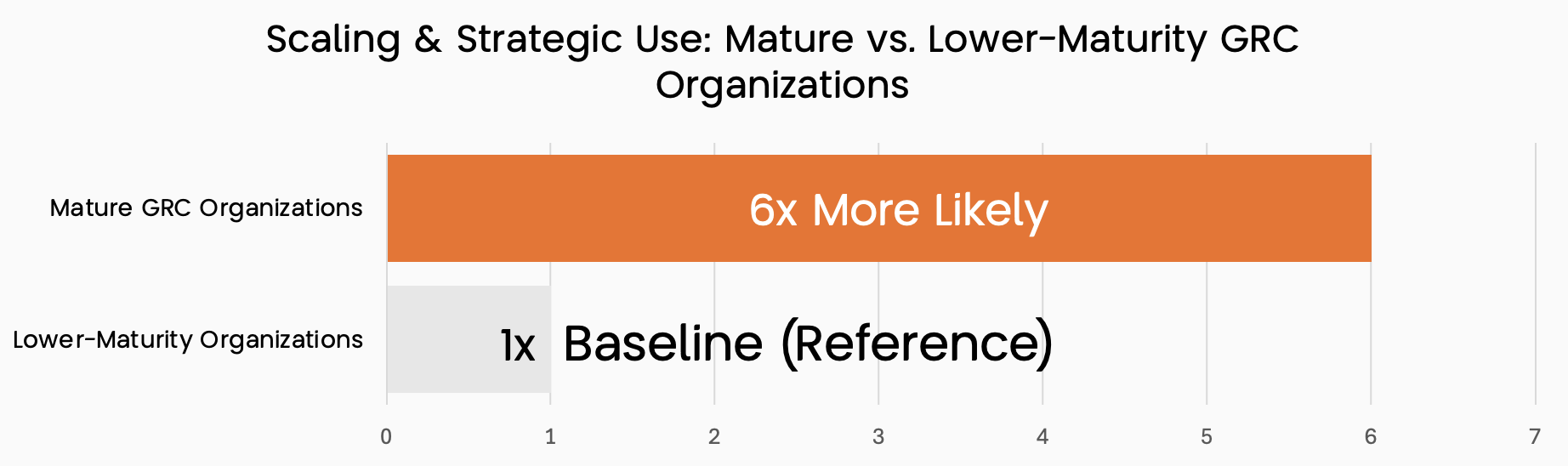

Mature GRC organizations are far more likely than lower-maturity programs to scale AI and apply it strategically. They show roughly six times the likelihood of reaching scaled, strategic use, underscoring the impact of program maturity.

Source: AuditBoard / Panterra (2025) (GRC Report)

Within companies, AI is being integrated directly into core GRC operations: automating policy monitoring, detecting anomalies across large data sets, and predicting emerging risks before they escalate. Advanced natural language and machine learning models now help compliance teams interpret complex regulations, assess third-party risks, and track adherence in real time. The result is a shift from reactive compliance toward proactive governance, where insights generated by AI support faster decisions, stronger accountability, and a more resilient risk culture.

Limitations and Challenges of AI in SOC 2 Attestation

While AI in SOC 2 audits offers clear efficiency gains, it has defined boundaries. Scoping, evidence evaluation, and audit opinion formation still depend on human expertise. These limitations highlight why professional judgment, audit ethics, and human oversight remain essential in the assurance process.

Source: ISACA Research, 2024

1. Scoping and Materiality

Defining what falls within the SOC 2 audit scope requires professional judgment and context. AI can map systems and analyze risk data but cannot determine which controls, processes, or risks are significant enough to include in a SOC 2 engagement. Human expertise ensures accuracy and relevance under the Trust Services Criteria.

2. Evidence Evaluation

AI tools help collect and organize evidence across cloud systems, yet deciding whether that evidence is sufficient and appropriate remains a professional responsibility.

Auditors must verify data sources, assess reliability, and confirm that AI-generated results trace back to credible, verifiable evidence, a key element of any SOC 2 Type 2 report.
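One way to make that traceability concrete is to store a content fingerprint for every artifact an AI-generated result cites, then re-check those fingerprints at review time. The Python sketch below assumes a hypothetical result record with a `cited_artifacts` mapping and uses invented file names and contents; it shows the idea only and is not a description of how any specific tool works.

```python
import hashlib

def fingerprint(artifact_bytes: bytes) -> str:
    """SHA-256 digest of an artifact's raw content."""
    return hashlib.sha256(artifact_bytes).hexdigest()

def trace_result(ai_result: dict, artifacts: dict) -> list:
    """List cited artifacts that are missing or no longer match their recorded hash."""
    problems = []
    for name, recorded_hash in ai_result["cited_artifacts"].items():
        if name not in artifacts:
            problems.append((name, "missing"))
        elif fingerprint(artifacts[name]) != recorded_hash:
            problems.append((name, "content changed"))
    return problems

# Hypothetical evidence store: file name -> raw bytes
evidence_store = {
    "access_review_q3.csv": b"user,role,reviewed\nalice,admin,yes\n",
    "mfa_policy.pdf": b"%PDF-1.7 placeholder content",
}

# Hypothetical AI-generated result that records what it relied on
ai_result = {
    "summary": "Quarterly access review covered all admin accounts.",
    "cited_artifacts": {
        name: fingerprint(data) for name, data in evidence_store.items()
    },
}

intact = trace_result(ai_result, evidence_store)
print(intact)

# Simulate an artifact being altered after the result was produced
evidence_store["access_review_q3.csv"] += b"bob,admin,no\n"
after_change = trace_result(ai_result, evidence_store)
print(after_change)
```

A matching fingerprint shows only that the cited artifact is unchanged. It says nothing about whether the artifact is reliable or sufficient, which the auditor still has to assess.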

3. Professional Skepticism

AI can flag anomalies in large data sets, but it cannot apply professional skepticism, the critical thinking needed to question management explanations or resolve inconsistencies.

Auditors must evaluate whether AI-driven findings align with SOC 2 compliance requirements and business reality.

4. Ethics and Independence

Ethical standards and independence form the foundation of any SOC 2 attestation.

AI may identify data bias or potential conflicts, but it cannot uphold audit ethics or professional independence. Auditors must oversee models, test for bias, and document assumptions to maintain trust and integrity in the engagement.

5. Human Trust and Communication

SOC 2 is ultimately built on trust. AI cannot replace human judgment, communication, or client relationships.

Auditors interpret results, explain findings, and reinforce confidence in the SOC 2 report, ensuring transparency and accountability.

In summary, AI strengthens efficiency and consistency in SOC 2 audits, but it cannot replace human expertise, ethics, or professional skepticism, the core principles that safeguard audit quality and client trust.

Conclusion

AI in SOC 2 attestation isn’t about rewriting the rules; it’s about improving how they’re applied. Firms that use AI responsibly gain lasting advantages: stronger evidence, sharper judgment, and faster audit readiness without compromising trust.

The path forward is not more technology but better coordination: aligning tools, data, and people around one goal, reliable, real-time assurance in the digital economy. This requires leadership focus, clear AI governance, and continuous learning.

Ultimately, AI in SOC 2 audits marks the next step in assurance, where efficiency and ethics work together and human judgment remains the foundation of trust.

How PKF Antares Can Help

SOC 2 readiness is not achieved by software alone. SOC 2 compliance automation platforms accelerate progress, but their value is realized only when guided by professionals who understand both the letter and the intent of the Trust Services Criteria. For organizations facing similar pressures from enterprise buyers or regulators, the path forward is clear: establish executive ownership, run a risk scoping workshop before activating any compliance platform, and align resources early to avoid control sprawl. SOC 2 readiness is most efficient when technology is configured to serve the organization’s workflows, not dictate them. Automation accelerates; expertise steers.
